Everyone in your company has, by now, tried using ChatGPT for work. They’ve had it draft an email, summarise a doc, write a Jira ticket. The model is confident, articulate, and, when the question is generic, useful.

And then someone asks it: “What’s our home office stipend?” “What’s the on-call rotation policy?” “What ISO controls apply to this process?”

The model doesn’t know. Of course it doesn’t. It was trained on the public internet, not on your company’s handbook, your runbooks, your quality SOPs, or the slide deck someone made for the all-hands two years ago. So your team does what they always did: they leave the AI tab and go digging through SharePoint, Confluence, Slack search, that one Notion page Jana made, or — most of the time — they ask a colleague who’s probably busy.

The fix isn’t a smarter chatbot. It’s a chatbot that actually knows your company. That’s the entire premise of an enterprise AI assistant — an LLM grounded in your own knowledge, answering every question with a citation back to the source.

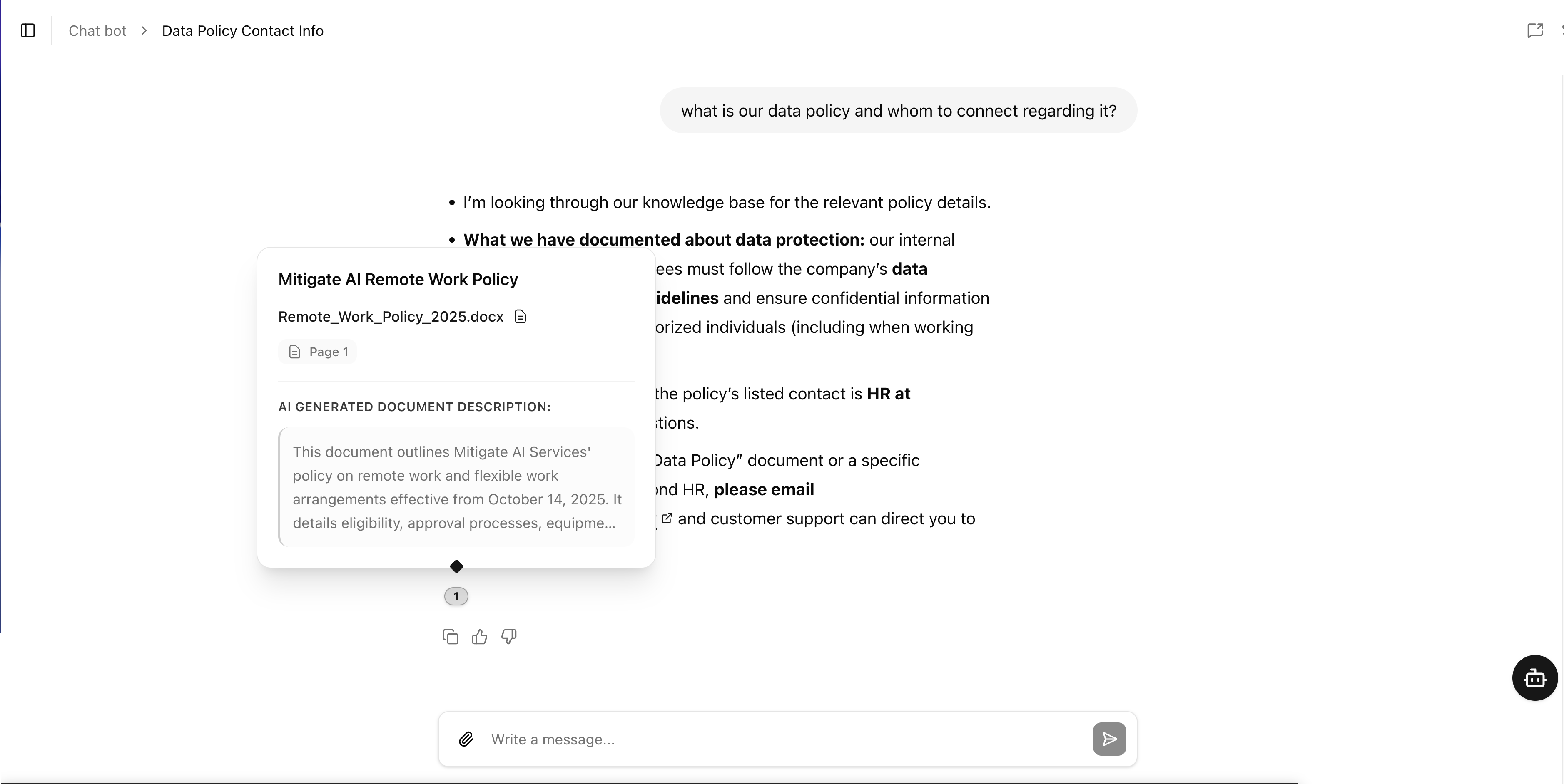

An employee asks: “What’s our data policy and whom do I contact about it?” The assistant doesn’t guess. It pulls the answer from your internal documents and shows exactly which file and which page the answer came from — every claim traceable back to the source.

What “grounded in your company knowledge” actually means

The technical term for this is retrieval-augmented generation. The plain-English version is short:

- Connect the assistant to your knowledge sources — the SharePoint sites, the Confluence space, the shared Drive folder, the Notion workspace, the file folder on someone’s laptop, the wiki nobody has updated since 2023.

- Index everything by meaning, not keyword. Every document gets broken into chunks and stored in a vector database, so the assistant can find “the bit about home office stipends” even if the user asked “is there a budget for my desk chair?”

- Ground every answer in the retrieved snippets. When someone asks a question, the assistant pulls the relevant passages, generates the answer from those, and cites the source documents so anyone can click through and verify.
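The three steps above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the platform's implementation: `embed` is a bag-of-words stand-in for a real embedding model (so it matches shared words, not meaning), the `INDEX` list stands in for a vector database, and the documents, page numbers and function names are hypothetical.

```python
import math
import re
from collections import Counter

# Step 1: hypothetical chunks; in practice these come from connected sources.
CHUNKS = [
    {"source": "handbook.pdf", "page": 12,
     "text": "Employees receive a home office stipend for desk and chair purchases."},
    {"source": "it-runbook.md", "page": 3,
     "text": "The on-call rotation changes every Monday morning."},
]

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Step 2: index every chunk once, keeping the vector next to its source.
INDEX = [(embed(c["text"]), c) for c in CHUNKS]

def retrieve(question, k=1):
    """Step 3a: return the k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[0]), reverse=True)
    return [chunk for _, chunk in ranked[:k]]

def build_prompt(question, chunks):
    """Step 3b: ground the model in the retrieved text and ask for citations."""
    context = "\n".join(f"[{c['source']} p.{c['page']}] {c['text']}" for c in chunks)
    return ("Answer only from the sources below and cite them.\n"
            f"{context}\nQuestion: {question}")

question = "Is there a budget for my desk chair?"
print(build_prompt(question, retrieve(question)))
```

The prompt that reaches the model already carries the source and page, which is what makes a click-through citation possible afterwards. A production system would swap `embed` for a real embedding model so that differently worded questions still land on the right passage.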

The result is the simplest possible product: a chat box where every employee can ask anything about how the company works, and get a fast, accurate, sourced answer. No more “I think it’s in the handbook somewhere.” No more pinging the same overworked colleague. No more guessing.

Inside the knowledge base

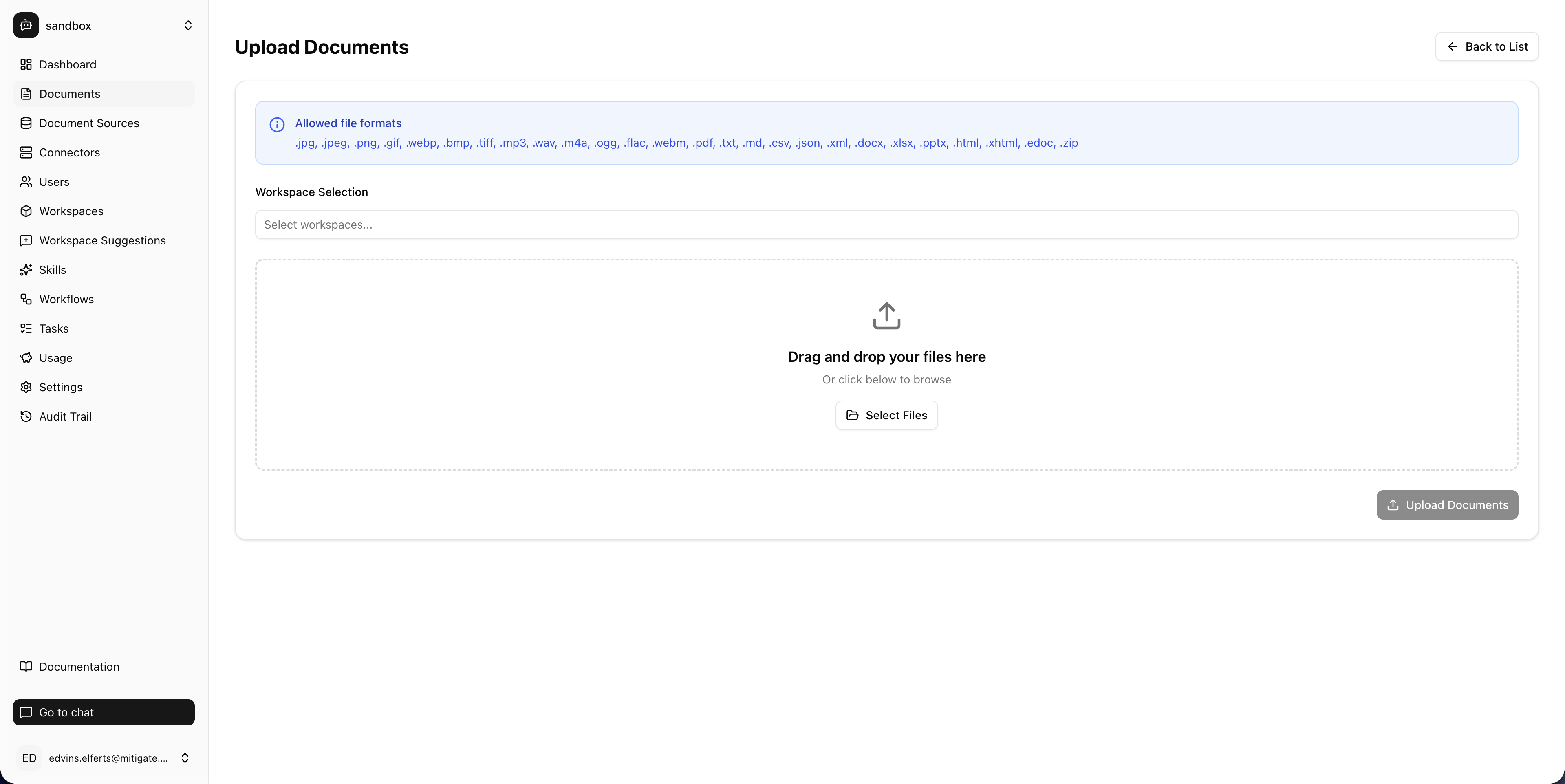

The thing that makes or breaks an enterprise assistant is whether it can actually ingest the messy reality of how your company stores knowledge. Not just clean Markdown in a tidy wiki — the real stuff. The 80-page PDF policy doc. The PowerPoint from the all-hands. The Excel sheet someone uses as a database. The voice memo from the customer interview. The DOCX everyone keeps editing.

Files don’t just sit there. Each one runs through a five-stage pipeline: load the content, chunk it into searchable segments, enrich it with an AI-generated title, description and locale, add contextual processing so each chunk carries its surrounding context, and finally vectorize it for semantic search. That’s why the assistant can find the right paragraph in a 200-page handbook from a question someone phrased in a completely different way.
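As a rough sketch, the five stages can be read as five small functions chained together. Everything here is an assumption made for illustration: the function names, the fixed-size word chunking, and the hashed word-count vector that stands in for an embedding model. The title, description and locale are passed in by hand so the example runs without a model, whereas the text above describes them as AI-generated.

```python
import zlib
from dataclasses import dataclass, field

@dataclass
class Chunk:
    text: str
    title: str = ""
    description: str = ""
    locale: str = ""
    context: str = ""
    vector: list = field(default_factory=list)

def load(raw: bytes) -> str:
    """Stage 1: extract text. Real loaders would parse PDF, DOCX, PPTX, audio."""
    return raw.decode("utf-8")

def chunk(text: str, size: int = 40) -> list:
    """Stage 2: split into segments of at most `size` words."""
    words = text.split()
    return [Chunk(" ".join(words[i:i + size])) for i in range(0, len(words), size)]

def enrich(chunks: list, title: str, description: str, locale: str) -> list:
    """Stage 3: attach document-level metadata to every chunk."""
    for c in chunks:
        c.title, c.description, c.locale = title, description, locale
    return chunks

def contextualize(chunks: list) -> list:
    """Stage 4: give each chunk a short note about where it sits."""
    for i, c in enumerate(chunks):
        c.context = f"{c.title}, part {i + 1} of {len(chunks)}"
    return chunks

def vectorize(chunks: list, dims: int = 8) -> list:
    """Stage 5: toy hashed word-count vector in place of a real embedding."""
    for c in chunks:
        c.vector = [0.0] * dims
        for word in f"{c.context} {c.text}".lower().split():
            c.vector[zlib.crc32(word.encode("utf-8")) % dims] += 1.0
    return chunks

def ingest(raw: bytes, title: str, description: str, locale: str) -> list:
    """Run all five stages in order."""
    chunks = enrich(chunk(load(raw)), title, description, locale)
    return vectorize(contextualize(chunks))

chunks = ingest(b"policy " * 100, "Travel policy", "How to book travel", "en")
print(len(chunks), chunks[0].context)
```

Note that the vector is computed over the context note plus the chunk text, so the surrounding context influences search and not just display.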

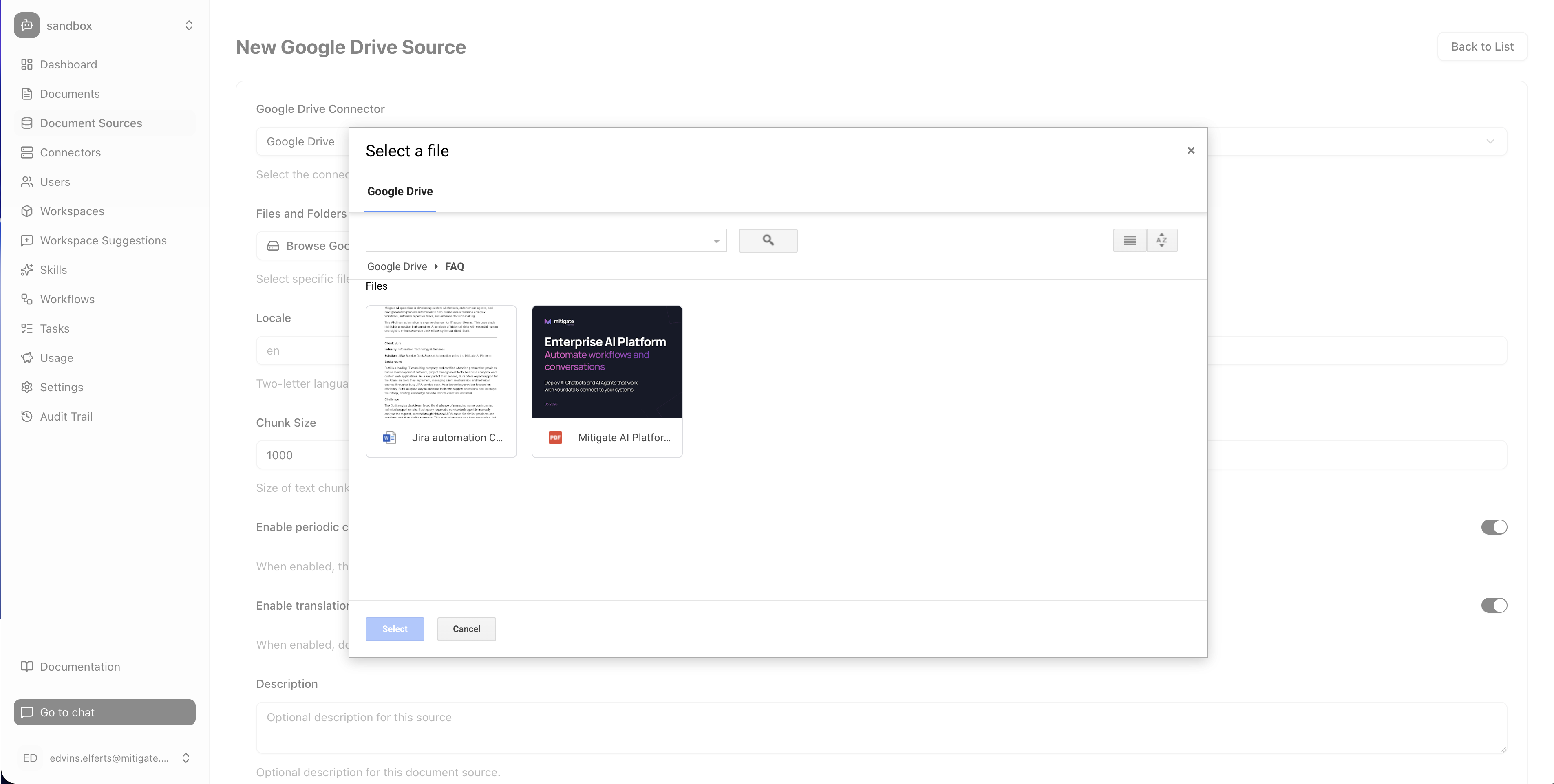

You don’t have to upload everything by hand. The platform connects directly to the systems where your knowledge already lives, and keeps itself in sync as those systems change.

A few platform details worth calling out, because they tend to matter the moment a real organisation tries to operate one of these:

- Periodic re-crawling. Documents change. Sources are re-imported automatically (daily by default), so when someone updates a policy the assistant picks up the change at the next crawl, with no manual re-upload or retraining.

- Authenticated sources. Web crawls support Basic Auth, session cookies, bearer tokens, API keys, and JavaScript rendering — so the assistant can index gated knowledge bases the same way an employee would read them.

- Translation. Mixed-language documents can be translated into a single base language during ingestion, so a Latvian policy and an English procedure can both be used to answer a question, in whichever language the employee asks it.

- Workspace scoping. Documents and sources are pinned to specific workspaces. The HR knowledge base lives in the HR workspace; the engineering runbooks live in the engineering workspace; the assistant in each only sees what it’s allowed to see.
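To make the re-crawling and workspace-scoping points concrete, here is a minimal sketch of how a source record might decide when it is due for re-import. The `Source` class, its field names and the example URL are hypothetical, and comparing content hashes to skip unchanged documents is an assumption of this sketch, not something the platform is stated to do.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class Source:
    url: str
    workspace: str                           # the only workspace that sees it
    interval: timedelta = timedelta(days=1)  # daily by default
    last_crawled: Optional[datetime] = None
    content_hash: Optional[str] = None

def is_due(source: Source, now: datetime) -> bool:
    """A source is due if it was never crawled or its interval has elapsed."""
    return source.last_crawled is None or now - source.last_crawled >= source.interval

def record_crawl(source: Source, content: bytes, now: datetime) -> bool:
    """Store the crawl result; return True if the content needs re-indexing."""
    new_hash = hashlib.sha256(content).hexdigest()
    changed = new_hash != source.content_hash
    source.content_hash = new_hash
    source.last_crawled = now
    return changed

src = Source("https://wiki.example.com/hr/policy", workspace="hr")
start = datetime(2025, 1, 1, 8, 0)
print(is_due(src, start), record_crawl(src, b"policy v1", start))
```

With a daily interval, the worst-case lag between a policy edit and the assistant seeing it is roughly one day; a shorter `interval` trades crawl load for freshness.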

One assistant, three useful flavours

The pattern is the same in every deployment — connect knowledge, retrieve, ground, cite. What changes is which knowledge, and which audience. In our work, three flavours of enterprise AI assistant come up over and over:

Policies, processes, "how do I…"

HR, IT, finance and operations questions. Onboarding rules, expense limits, travel booking, benefits, on-call rotations, password resets. The first place every employee asks instead of pinging a person.

Onboarding and continuous training

Grounded in your courseware, certifications, product training and technical handbooks. New hires ramp up in days, not months. Existing staff stop re-asking the same questions every time the product changes.

Standards, SOPs, audit-ready answers

ISO 9001, ISO 27001, GDPR, regulated processes, internal SOPs and runbooks. Quality and compliance teams stop being the human lookup table and become reviewers of an assistant that cites the source clause behind every answer.

Same platform underneath. Different knowledge base, different audience, different access controls. Most companies start with one and add the others as adoption proves itself.

See the helpdesk assistant in action

Here’s what it looks like in production. A backend engineer starts a new role on Monday. By Wednesday they have five typical new-hire questions: what to focus on this week, whether there’s a home office budget, how business travel is booked, how the on-call rotation works, whether there’s a learning budget for a Kubernetes certification.

Five questions that would normally mean five Slack messages to five different people. Instead, they open one chat — and in two minutes get back structured answers with exact policy references, step-by-step instructions, and tables tailored to their situation.

Zoom out and the impact is the same in every flavour: HR stops repeating itself, learning teams stop fielding the same five training questions, quality teams stop being the human lookup table. The hours that used to disappear into “where is X documented?” come back.

How the Mitigate AI platform implements an enterprise assistant

Building a demo on top of an LLM is a weekend project. Building an enterprise assistant your security team will sign off on is where the work lives. Here’s what makes a Mitigate AI assistant production-ready, not demo-ready.

Plugs into the knowledge sources you already have. Documents stay where they live. The assistant connects to your existing repositories, indexes their content, and stays in sync as things change. We support the platforms most enterprises actually run on.

Citations on every answer. The assistant doesn’t just say “your home office budget is €500.” It says it, then shows you exactly which document and which section the answer came from, with a click-through link. If the source document changes, the answer changes. If the assistant can’t find the answer, it says so — instead of inventing one.
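One way to picture the "cite or say so" behaviour is a relevance threshold in front of the model. The sketch below is an assumption about how such a guard could look, not a description of the product: `Passage`, `MIN_SCORE` and `answer_with_citations` are hypothetical names, and `generate` is any callable standing in for the LLM call.

```python
from dataclasses import dataclass

@dataclass
class Passage:
    document: str
    section: str
    text: str
    score: float  # retriever similarity, assumed to lie between 0 and 1

MIN_SCORE = 0.5  # hypothetical cut-off; a real system would tune this

def answer_with_citations(passages, generate):
    """Answer only from sufficiently relevant passages, otherwise decline."""
    relevant = [p for p in passages if p.score >= MIN_SCORE]
    if not relevant:
        return {"answer": "I could not find this in the knowledge base.",
                "citations": []}
    return {"answer": generate(relevant),
            "citations": [f"{p.document}, {p.section}" for p in relevant]}

passages = [
    Passage("handbook.pdf", "4.2 Home office", "The budget is EUR 500.", 0.82),
    Passage("it-runbook.md", "On-call", "Rotation changes weekly.", 0.10),
]
# A trivial stand-in "model" that echoes the best passage.
print(answer_with_citations(passages, lambda ps: ps[0].text))
```

Because the citations are built from the same passages the model was given, an answer can never cite a document that was not retrieved.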

Role-based access, baked in. Engineering can’t see HR’s sensitive documents. Junior staff can’t see the board pack. The assistant respects whatever permissions already exist in the underlying systems, and adds its own per-team scoping on top so you can deploy the same platform for the helpdesk, the learning team, and quality without any of them seeing each other’s knowledge.
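The two layers described here, source permissions plus workspace scoping, amount to a filter applied before retrieval ever runs. The following is a minimal sketch with hypothetical names (`Doc`, `User`, `visible_docs`) and made-up groups; how the platform actually maps permissions from the underlying systems is not specified in this article.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Doc:
    name: str
    workspace: str
    allowed_groups: frozenset  # groups permitted by the source system

@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset

def visible_docs(user, workspace, docs):
    """Keep a document only if it is in the workspace AND shares a group."""
    return [d for d in docs
            if d.workspace == workspace and d.allowed_groups & user.groups]

DOCS = [
    Doc("salary-bands.xlsx", "hr", frozenset({"hr"})),
    Doc("handbook.pdf", "hr", frozenset({"hr", "engineering"})),
    Doc("deploy-runbook.md", "engineering", frozenset({"engineering"})),
]
engineer = User("sam", frozenset({"engineering"}))
print([d.name for d in visible_docs(engineer, "hr", DOCS)])
```

Filtering before retrieval, instead of hiding results afterwards, means restricted text never reaches the model's prompt in the first place.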

Secure access through your existing identity provider. The assistant signs employees in through your single sign-on (Entra ID, Okta, Auth0, Google Workspace, Keycloak). Each conversation is attributed to a real, named user inside the existing audit trail your IT and security teams already trust.

Pick the LLM you trust. OpenAI, Anthropic, Google, Mistral, OpenRouter — switchable per workspace, no retraining required. If your compliance team needs an EU-hosted model, or your CISO has a strong opinion, you’re not locked in.
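Per-workspace switching can be thought of as a lookup that happens at question time, which is why the indexed knowledge does not need rebuilding when the model changes. This is a hypothetical configuration sketch: the workspace names and the model identifiers are placeholders, not real model IDs or actual platform settings.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str  # placeholder identifier, not a real model name

DEFAULT_MODEL = ModelChoice("openai", "default-model")

# Hypothetical per-workspace overrides.
WORKSPACE_MODELS = {
    "hr": ModelChoice("mistral", "eu-hosted-model"),
    "quality": ModelChoice("anthropic", "compliance-model"),
}

def model_for(workspace: str) -> ModelChoice:
    """Resolve the model for a workspace, falling back to the default."""
    return WORKSPACE_MODELS.get(workspace, DEFAULT_MODEL)

print(model_for("hr").provider, model_for("engineering").provider)
```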

Same agent platform, scoped down. The enterprise assistant runs on the same platform that powers our agents and our e-commerce assistants. The same governance, the same observability, the same approval workflows for any action it takes. You’re not buying a third tool — you’re running a third use case on infrastructure you already trust.

What changes when this lands in your organisation

A few things move at once, and they reinforce each other:

- HR, IT, and operations stop repeating themselves. The same ten questions that used to fill the inbox stop arriving — because the assistant answered them at 2am with a citation.

- New hires stop asking “where is X documented?” and start asking “how do I do X?” — the difference is days versus months of ramp time.

- Quality and compliance teams stop being the human lookup table. When the assistant can answer “does this process meet ISO 27001 control A.8.2?”, those teams move from triage to oversight.

- Knowledge stops dying when the senior person leaves. Anything captured in a document stays accessible the way it always should have been.

- Confidence in answers goes up. Because every answer has a source, “the assistant told me” becomes “the assistant told me, and here’s the policy section.” That’s a different conversation with auditors, with regulators, and with your own team.

How to get started with the Mitigate AI assistant

You don’t need to index everything to start. The fastest path is to pick one flavour and one knowledge base, and prove it on a contained audience before broadening. A few good starting points:

- The HR/IT helpdesk that gets the same ten questions every week. Index the handbook, the IT runbook, the benefits portal — and watch the inbox shrink.

- The onboarding programme. A learning assistant grounded in your onboarding material gets new hires productive in a fraction of the usual time.

- The quality / compliance reference. ISO and SOP teams use the assistant to surface the exact clause for any process question — and audit prep stops being a fire drill.

Pick one. We’ll handle the connectors, the indexing, the role-based access, and the rollout. Get in touch.

The bottom line

A generic chatbot answers from a generic internet. An enterprise AI assistant answers from your company — with the citations to prove it. Same chat box, completely different conversation: one that finally treats your handbook, your runbooks, your SOPs and your decisions as the ground truth they were always supposed to be.

Ready to give your team a single place to ask anything about how the company works? Get in touch and let’s talk.